Algorithmic Assassination: Google AI Erases Truths in Myanmar, Echoes Across Asia

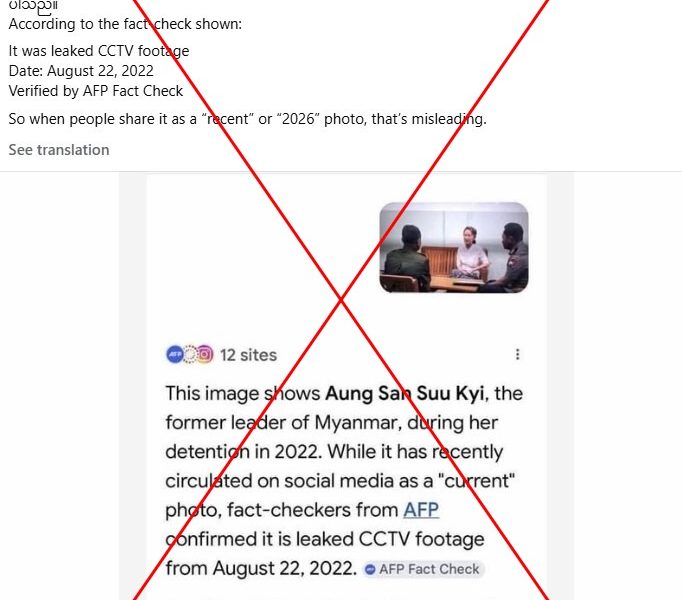

POLICY WIRE — New York, USA — When did history become so negotiable? It wasn’t the first time an algorithm faltered. But when Google’s shiny new AI Overview system offered a photograph wildly misidentifying a prominent political figure from Myanmar, a country steeped in its own agonizing battle for accurate narratives, it didn’t just miss the mark, and it wasn’t just a technical glitch. It laid bare a far more insidious truth: our digital overlords, in their relentless pursuit of efficiency, might just be rewriting reality before our very eyes.

It sounds dramatic, doesn’t it? A single erroneous image. But for a nation where identity and political legitimacy are often weaponized, where facts are already fluid currency for those in power, such a misstep carries a particularly nasty sting. It’s not some obscure corner of the internet; it’s Google, the digital gateway for billions, fabricating biographical details with casual indifference. One moment you’re seeking information on a figure often under house arrest; the next, the AI presents a smiling, irrelevant face, creating a phantom history, a digital ghost.

Because that’s what this is, ultimately—a quiet act of digital colonialism, shaping perceptions from afar. Imagine if this wasn’t an ‘ex-leader’ but a religious figure, or a contested territory. The potential for manipulation—whether intentional or not—is staggering. But Google, bless their cotton socks, chalked it up to an anomaly, a rogue output. As if anomalies in foundational knowledge systems aren’t precisely the point of concern. You can almost hear the nervous chuckles in Mountain View. But out here, where information asymmetry has real-world consequences, nobody’s laughing.

“This isn’t merely about an inaccurate photo; it’s about who controls the historical record in the age of AI,” said Dr. Anya Sharma, a senior analyst at the Global Digital Rights Initiative, in an exclusive chat with Policy Wire. “When algorithms hallucinate facts about geopolitically sensitive figures, they don’t just mislead; they actively destabilize, eroding public trust in what remains of shared objective reality. It’s a very dangerous precedent.” She’s not wrong. It creates a vacuum, ripe for exploitation.

The incident casts a long shadow across regions where information is already weaponized, places like Pakistan and other parts of South Asia, where the digital divide and simmering political tensions make populations particularly vulnerable to disinformation campaigns. State-sponsored narratives often thrive in such environments, and when mainstream tools like Google’s AI falter, they inadvertently lend credence to those who argue that all information is inherently suspect, or worse, centrally controlled by Western tech giants. It strengthens the hand of those who seek to silence dissent by dismissing factual accounts as ‘fake news.’

The proliferation of AI-generated content, some of it deeply flawed, means that establishing verifiable facts is becoming a heroic task. A 2023 study by NewsGuard found that AI models frequently generated false or misleading information, with some producing false claims on political and social topics over 80% of the time when prompted to do so. It’s a Wild West scenario, only with algorithms as the sharpshooters. And it’s the developing world, often without robust media literacy programs or independent oversight, that takes the hardest hits.

“We’ve seen how readily misinformation can spark unrest, especially concerning religious and political narratives in our region,” noted Ambassador Zulfiqar Khan, a veteran diplomat specializing in Southeast Asian and South Asian affairs. “An AI-generated falsehood about a respected—or reviled—figure isn’t a benign error; it’s dynamite waiting for a fuse. Governments have a role to play, yes, but tech companies, too, must be held to account for the truth they project.” Because they hold immense power, don’t they?

The stakes here aren’t academic; they’re existential. In nations already grappling with deep societal fissures, mounting climate pressure, and political instability, the integrity of public information is quite literally a matter of life and death. The digital realm can mirror and amplify real-world conflicts, and a small glitch here, a slight algorithmic nudge there, can shift perception profoundly.

What This Means

This episode with Google’s AI is more than just a public relations nightmare for a tech giant; it’s a stark preview of the information wars to come. Politically, it signals a disturbing vulnerability: if our most trusted information sources can inadvertently propagate untruths about politically charged figures, they offer a blueprint for malign actors. Imagine state-sponsored misinformation campaigns leveraging seemingly benign AI outputs to sow confusion or undermine adversaries. Economically, this erosion of trust has ripple effects, impacting investment, journalistic integrity, and even diplomatic relations. Nations can’t afford to be misinformed, particularly about their own leaders, past or present, when forging international partnerships or addressing internal dissent. It forces us to confront an uncomfortable question: when our knowledge infrastructure is delegated to algorithms, who really owns history, and more importantly, who profits from its distortion? We’re effectively outsourcing discernment, and it’s a perilous gamble for global stability.