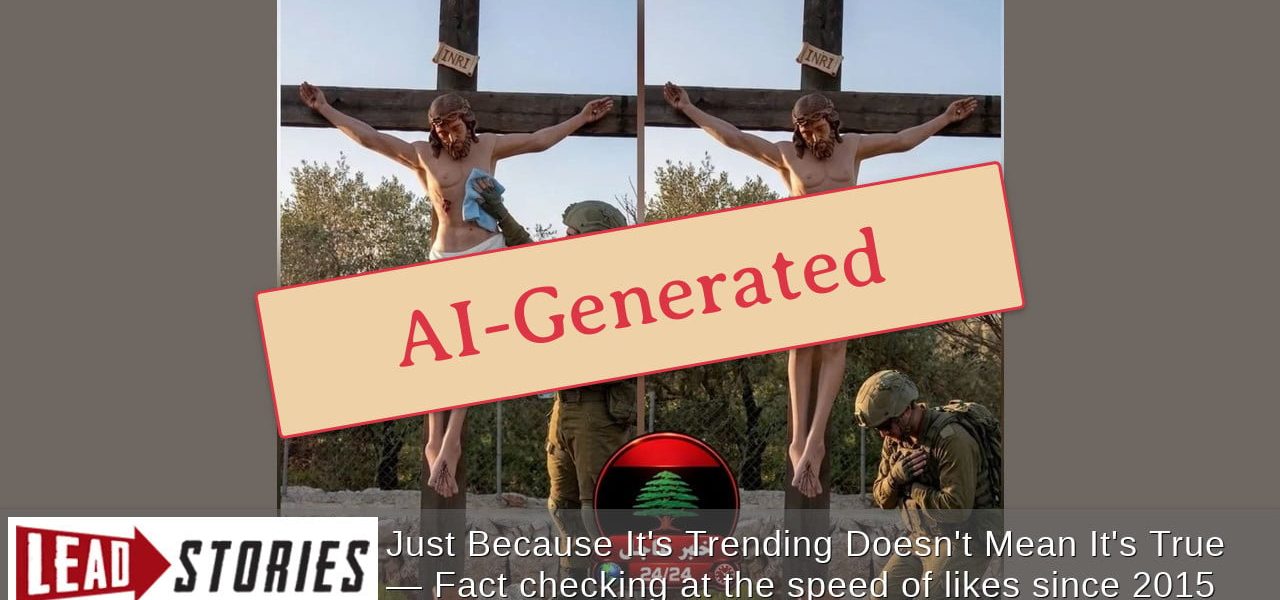

Fact Check: Viral Images of IDF Soldier Repairing Crucifixion Statue Are AI-Generated Fakes


POLICY WIRE — Global — Viral social media images portraying an Israel Defense Forces (IDF) soldier mending a vandalized crucifixion statue have been debunked as products of artificial intelligence (AI).

These striking visuals, which depicted an IDF serviceman seemingly repairing a religious icon after an alleged act of desecration, rapidly gained traction across various platforms. The images emerged amidst heightened sensitivities surrounding conflicts in the region, fueling outrage and confusion among online users.

However, examination by fact-checking organizations and digital forensics specialists has found the viral photographs to be inauthentic. Experts point to telltale signs of synthetic media, including distorted anatomical features, inconsistent lighting, and unusual textures, all indicative of AI generation.

The Spread of AI Misinformation

The fabricated narrative accompanying these images, suggesting a two-part event of vandalism followed by an attempt at restoration by an IDF soldier, is entirely without factual basis. This incident serves as a stark reminder of the escalating challenge posed by misinformation and disinformation propagated through advanced AI technologies.

“The ease with which convincing, yet entirely false, narratives can be manufactured and disseminated using AI tools underscores a critical vulnerability in our information ecosystem. Users must remain vigilant and apply critical thinking to all content encountered online.”

This situation highlights the broader societal impact of digitally manipulated content, capable of inflaming tensions and distorting public perception. The rapid spread of such imagery necessitates a proactive approach to media literacy and source verification.


Verifying Online Content

As AI tools become more sophisticated, distinguishing between genuine and fabricated content grows increasingly difficult for the average internet user. Experts recommend cross-referencing information with reputable news sources and utilizing reverse image search tools to ascertain origin and authenticity.

The spread of unverified information regarding the IDF and regional events is a recurring issue. For instance, reports have also surfaced about female IDF reservists boycotting service following sexual harassment allegations, underscoring the complexities of reporting from the conflict zone.

The global community continues to grapple with the ethical implications and regulatory challenges presented by the widespread availability of AI generation tools. Educating the public on recognizing AI-produced fakes is paramount in maintaining a reliable information landscape.